Getting Good Data, Part II (of many)

Okay, so by now you’ve heard dozens and dozens of times that you guys produce really good data: your aggregated answers are 97% correct overall (see here and here and here). But we also know that not all images are equally easy. More specifically, not all species are equally easy. It’s a lot easier to identify a giraffe or a zebra than it is to decide between an aardwolf and a striped hyena.

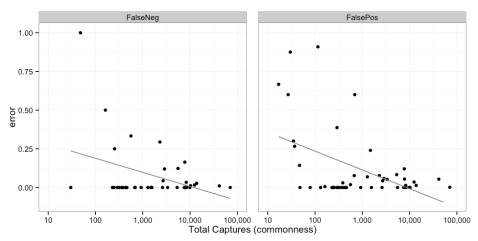

The plot below shows the different error rates for each species. Note that error comes in two forms. You can have a “false negative,” which means you miss a species when it’s truly there. And you can have a “false positive,” which means you report a species as being there when it really isn’t. Each error rate is a proportion from 0 to 1.

We calculated this by comparing the consensus data to the gold standard dataset that Margaret collated last year. Note that at the bottom of the chart there are a handful of species that don’t have any values for false negatives. That’s because, for statistical reasons, we could only calculate false negative error rates from completely randomly sampled images, and those species are so rare that they didn’t appear in the gold standard dataset. But for false positives, we could randomly sample images from any consensus classification – so I gathered a bunch of images that had been identified as these rare species and checked them to calculate false positive rates.
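For anyone who wants to see the arithmetic, here is a minimal Python sketch of the two error rates as described above. It is only an illustration, not our actual analysis code: the function names and the toy labels are made up, and it assumes each image carries a single gold standard label and a single consensus label.

```python
def false_negative_rate(random_sample, species):
    """Share of images truly containing `species` whose consensus answer missed it.

    random_sample: list of (true_label, consensus_label) pairs from randomly
    sampled images. Returns None if the species never appears in the sample,
    which is why very rare species can end up with no false negative value.
    """
    consensus_for_true = [c for t, c in random_sample if t == species]
    if not consensus_for_true:
        return None
    missed = sum(1 for c in consensus_for_true if c != species)
    return missed / len(consensus_for_true)


def false_positive_rate(checked_sample, species):
    """Share of images reported as `species` that turned out to be something else.

    checked_sample: list of (true_label, consensus_label) pairs. It does not
    need to be a random sample of all images; it can be a batch of images
    that were reported as the species and then checked by hand.
    """
    truth_for_reported = [t for t, c in checked_sample if c == species]
    if not truth_for_reported:
        return None
    wrong = sum(1 for t in truth_for_reported if t != species)
    return wrong / len(truth_for_reported)


# Made-up (true label, consensus label) pairs, purely for illustration.
toy_sample = [
    ("zebra", "zebra"),
    ("zebra", "zebra"),
    ("aardwolf", "striped hyena"),
    ("aardwolf", "aardwolf"),
    ("giraffe", "giraffe"),
]

print(false_negative_rate(toy_sample, "aardwolf"))
print(false_positive_rate(toy_sample, "striped hyena"))
print(false_negative_rate(toy_sample, "rhino"))
```

In this toy sample one of the two true aardwolves was missed, the single “striped hyena” report was really an aardwolf, and rhino never appears, so it gets no false negative value at all.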

Now, if a species has really low rates of false negatives and really low rates of false positives, then it’s one people are really good at identifying all the time. Note that in general, species have pretty low rates of both types of error. Furthermore, species with lower rates of false negatives tend to have higher rates of false positives. There aren’t really any species with high rates of both types of error. Take rhinos, for example: folks often identify a rhino when it’s not actually there, but never miss a rhino if it is there.

Also: we see that rare species are just generally harder to identify correctly than common species. The plot below shows the same false negative and false positive error rates plotted against the total number of pictures for every species. Even though there is some noise, those lines reflect significant trends: in general, the more pictures of an animal, the more often folks get it right!

This makes intuitive sense. It’s really hard to get a good “search image” for something you never see. But folks are also especially excited to see something rare. You can see this if you search the talk pages for “rhino” or “zorilla.” Both of these have high false positive rates, meaning people say it’s a rhino or zorilla when it’s really not. As a result, most of the images tagged as these really rare creatures turn out to be something else.

But that’s okay for the science. Recall that we can assess how confident we are in an answer based on the evenness score, fraction support, and fraction blanks. Because such critters are so rare, we want to be really sure that those IDs are right. And because those animals are so rare, and because you have high levels of agreement on the vast majority of images, it’s really easy for us to review any “uncertain” image that’s been ID’d as a rare species.
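As a rough illustration of how those three measures could be computed from one image’s votes, here is a hedged Python sketch. It rests on several assumptions that are not spelled out in this post: that the evenness score is Pielou’s evenness index over the species votes, that fraction support is the share of non-blank votes going to the winning species, and that a “nothing here” vote is stored under the hypothetical label `"blank"`. The function name and the example votes are invented for the example.

```python
import math
from collections import Counter


def confidence_metrics(votes, blank_label="blank"):
    """Summarise agreement among the votes cast on a single image.

    votes: list with one label per volunteer. `blank_label` is a hypothetical
    marker for a "nothing here" vote.
    """
    if not votes:
        raise ValueError("need at least one vote")

    species_votes = [v for v in votes if v != blank_label]
    fraction_blanks = (len(votes) - len(species_votes)) / len(votes)

    if not species_votes:
        # Everyone said "nothing here", so there is no species to score.
        return {"consensus": None, "fraction_support": None,
                "evenness": None, "fraction_blanks": fraction_blanks}

    counts = Counter(species_votes)
    consensus, top_count = counts.most_common(1)[0]
    # Assumption: support is measured among the non-blank votes only.
    fraction_support = top_count / len(species_votes)

    if len(counts) == 1:
        # Unanimous answer: treat evenness as 0 (complete agreement).
        evenness = 0.0
    else:
        # Pielou's evenness: Shannon entropy divided by its maximum, ln(S).
        shares = [n / len(species_votes) for n in counts.values()]
        shannon = -sum(p * math.log(p) for p in shares)
        evenness = shannon / math.log(len(counts))

    return {"consensus": consensus, "fraction_support": fraction_support,
            "evenness": evenness, "fraction_blanks": fraction_blanks}


# Made-up votes for one image: 7 aardwolf, 2 striped hyena, 1 "nothing here".
example = confidence_metrics(["aardwolf"] * 7 + ["striped hyena"] * 2 + ["blank"])
print(example)
```

Under these assumptions, an evenness near 0 together with a fraction support near 1 means almost everyone agreed, while an evenness near 1 means the votes were split about evenly and the image is a good candidate for review.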

Pretty cool, huh?

I just tagged a photo as striped hyena when I really wanted to say wild dog, which wasn’t an option.

Art – from seasons 1-6 there were 115 images retired as “striped hyenas.” Based on false positive rates, I’d estimate that only ~15 of those are actually striped hyenas and the rest are aardwolves.

Nancy – can you share the subject ID for that photo? If you come across anything that might be a wild dog, it would be great to tag that on Talk. We don’t know of any wild dogs resident within the camera study area, which is why there is no option in the classification interface.

I’m not sure how to go back to a picture I’ve already done, but I did save a screenshot.

Yay, found a serval! (54cfd81687ee0404d5083f95_0.JPG)

That makes up for all the “no animals present” 😉

How many true positives of striped hyena pictures are there?

Very interesting analysis!

From my mod’s-eye viewpoint, one of the species that most often confuses people and results in false positives is the white-tailed mongoose – and that may be because you have NO PICTURE of one in the ID guide, although it must be one of the most commonly captured mongooses. Something to fix for next time?

And…any idea when next season will come up?

AH! David, that is a huge oversight on our part. I’ll make that a priority to update…we definitely need a white-tailed mongoose in the filtering guide…

And…Season 9 will be a ways off, but we’ve got a couple of mini-seasons we’re planning on…more to come on that soon!