More results!

As I’m writing up my dissertation (ahh!), I’ve been geeking out with graphs and statistics (and the beloved/hated stats program R). I thought I’d share a cool little tidbit.

Full disclosure: this is just a bit of an expansion on something I posted back in March about how well the camera traps reflect known densities. Basically, as camera traps become more popular, researchers are increasingly looking for simple analytical techniques that can allow them to rapidly process data. Using the raw number of photographs or animals counted is pretty straightforward, but is risky because not all animals are equally “detectable”: some animals behave in ways that make them more likely to be seen than other animals. There are a lot of more complex methods out there to deal with these detectability issues, and they work really well — but they are really complex and take a long time to work out. So there’s a fair amount of ongoing debate about whether or not raw capture rates should ever be used even for quick and dirty rapid assessments of an area.

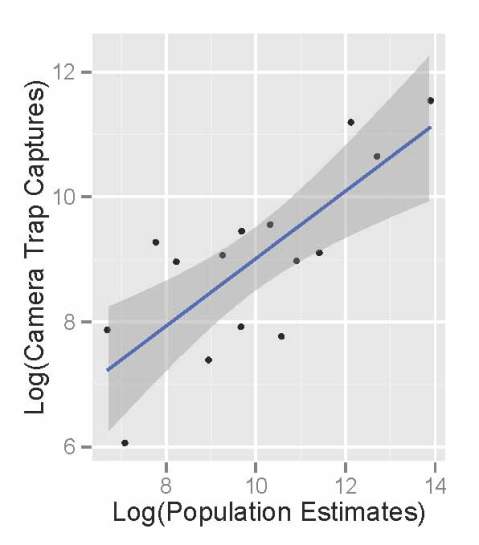

Since the Serengeti has a lot of other long term monitoring, we were able to compare camera trap capture rates (# of photographs weighted by group size) to actual population sizes for 17 different herbivores. Now, it’s not perfect — the “known” population sizes reflect herbivore numbers in the whole park, and we only cover a small fraction of the park. But from the graph below, you’ll see we did pretty well.

Actual herbivore densities (as estimated from long-term monitoring) are given on the x-axis, and the # photographic captures from our camera survey are on the y-axis. Each species is in a different color (migratory animals are in gray-scale). Some of the species had multiple population estimates produced from different monitoring projects — those are represented by all the smaller dots, and connected by a line for each species. We took the average population estimate for each species (bigger dots).

We see a very strong positive relationship between our photos and actual population sizes: we get more photos for species that are more abundant. Which is good! Really good! The dashed line shows the relationship between our capture rates and actual densities for all species. We wanted to make sure, however, that this relationship wasn’t totally dependent on the huge influx of wildebeest and zebra and gazelle — so we ran the same analysis without them. The black line shows that relationship. It’s still there, it’s still strong, and it’s still statistically significant.
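For the curious, here is a minimal sketch of this kind of comparison, written in Python rather than R. It assumes a straight-line fit on log-transformed values, which may not be the exact model behind the graph. The numbers are invented placeholders, not the real Serengeti figures, and `fit_loglog` is a hypothetical helper name. It fits the line once for all species and once without the migrants.

```python
import math

def fit_loglog(population, captures):
    """Least-squares fit of log10(captures) on log10(population).

    Returns (slope, intercept, r_squared).
    """
    xs = [math.log10(p) for p in population]
    ys = [math.log10(c) for c in captures]
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    syy = sum((y - mean_y) ** 2 for y in ys)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    r_squared = (sxy * sxy) / (sxx * syy)
    return slope, intercept, r_squared

# Placeholder values for illustration only:
# species -> (population estimate, photo captures, is_migratory)
survey = {
    "wildebeest": (1_000_000, 50_000, True),
    "zebra": (200_000, 12_000, True),
    "gazelle": (300_000, 20_000, True),
    "buffalo": (30_000, 2_500, False),
    "topi": (40_000, 800, False),
    "eland": (10_000, 500, False),
    "reedbuck": (5_000, 60, False),
}

# Fit using every species.
all_fit = fit_loglog(
    [pop for pop, _, _ in survey.values()],
    [cap for _, cap, _ in survey.values()],
)

# Fit again with the migratory species left out.
resident_fit = fit_loglog(
    [pop for pop, _, mig in survey.values() if not mig],
    [cap for _, cap, mig in survey.values() if not mig],
)

print("all species:    slope=%.2f  r2=%.2f" % (all_fit[0], all_fit[2]))
print("residents only: slope=%.2f  r2=%.2f" % (resident_fit[0], resident_fit[2]))
```

If the slope stays positive and the fit stays reasonably tight after dropping the migrants, the pattern is not being driven by those few very abundant species alone.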

Now, the relationship isn’t perfect. Some species fall above the line, and some below the line. For example, reedbuck and topi fall below the line – meaning that given how many topi really live in Serengeti, we should have gotten more pictures. This might be because topi mostly live in the northern and western parts of Serengeti, so we’re just capturing the edge of their range. And reedbuck? This might be a detectability issue — they tend to hide in thickets and so might not pass in front of cameras as often as animals that wander a little more actively.

Ultimately, however, we see that the cameras do a good overall job of catching more photos of more abundant species. Even though it’s not perfect, it seems that raw capture rates give us a pretty good quick look at a system.

What we’ve seen so far, Part III

Over the last few weeks, I’ve shared some of our preliminary findings from Seasons 1-6 in two earlier posts. As we’re still wrapping up the final stages of preparation for Season 7, I thought I’d continue in that vein.

One of the coolest things about camera traps is our ability to simultaneously monitor many different animal species all at once. This is a big deal. If we want to protect the world around us, we need to understand how it works. But the world is incredibly complex, and the dynamics of natural systems are driven by many different species interacting with many others. And since some of these critters roam for hundreds or thousands of miles, studying them is really hard.

I have for a while now been really excited about the ability of camera traps to help scientists study all of these different species all at once. But cameras are tricky, because turning those photographs into actual data on species isn’t always straightforward. Some species, for example, seem to really like cameras, so we see them more often than we really should, meaning we might think there are more of that critter than there really are. There are statistical approaches to deal with this kind of bias in the photos, but these statistics are really complex and time-consuming.

This has actually sparked a bit of a debate among researchers who use camera traps. Researchers and conservationists have begun to advocate camera traps as a cost-effective, efficient, and accessible way to quickly survey many understudied, threatened ecosystems around the world. They argue that basic counting of photographs of different species is okay as a first pass to understand what animals are there and how many of them there are. And that requiring the use of the really complex stats might hinder our ability to quickly survey threatened ecosystems.

So, what do we do? Are these simple counts of photographs actually any good? Or do we need to spend months turning them into more accurate numbers?

Snapshot Serengeti is really lucky in that many animals have been studied in Serengeti over the years. Meaning that unlike many camera trap surveys, we can actually check our data against a big pile of existing knowledge. In doing so, we can figure out what sorts of things cameras are good at and what they’re not.

Comparing the raw photographic capture rates of major Serengeti herbivores to their population sizes as estimated in the early 2000s, we see that the cameras do an okay job of reflecting the relative abundance of different species. The scatterplot below shows the population sizes of 14 major herbivores estimated from Serengeti monitoring projects on the x-axis, and camera trap photograph rates of those herbivores on the y-axis. (We take the logarithm of each value for statistical reasons.) There are really more wildebeest than zebra than buffalo than eland, and we see these patterns in the number of photographs taken.
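One simple way to check whether the ordering of species matches is a rank correlation. The sketch below is in Python and uses invented placeholder numbers, not the real estimates. `ranks` and `spearman` are hypothetical helper names, and this is not necessarily the statistic used for the plot. Taking logarithms does not change the ranks, so the result is the same on raw or logged values.

```python
def ranks(values):
    """Rank values from 1 (smallest) upward; assumes there are no ties."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    result = [0] * len(values)
    for rank, index in enumerate(order, start=1):
        result[index] = rank
    return result

def spearman(xs, ys):
    """Spearman rank correlation for tie-free data."""
    n = len(xs)
    d_squared = sum((a - b) ** 2 for a, b in zip(ranks(xs), ranks(ys)))
    return 1 - 6 * d_squared / (n * (n * n - 1))

# Placeholder values for illustration only, in the order:
# wildebeest, zebra, buffalo, eland
population = [1_000_000, 200_000, 30_000, 10_000]
photos = [50_000, 12_000, 2_500, 500]

print(spearman(population, photos))
```

A value near 1 means the cameras rank the species in the same order as the population estimates; a value near 0 means the photo counts say little about which species is more common.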

As we saw the other week, monthly captures show that we can get a decent sense of how these relative abundances change through time.

So, by comparing the camera trap photos to known data, we see that they do a pretty good job of sketching out some basics about the animals. But the relationship also isn’t perfect.

So, in the end, I think that our Snapshot Serengeti data suggests that cameras are a fantastic tool and that raw photographic capture rates can be used to quickly develop a rough understanding of new places, especially when researchers need to move quickly. But to actually produce specific numbers, say, how many buffalo per square-km there are, we need to dive into the more complicated statistics. And that’s okay.

Let the analyses begin!

Truth be told, I *have* been working on data analysis from the start. It’s actually one of my favorite parts of research — piecing together the story from all the different puzzle pieces that have been collected over the years.

But right now I am knee-deep in taking a closer look at the camera trap data. Since we have *so* many cameras taking pictures every day, I want to look at where the animals are not just overall, but from day to day, hour to hour. I’m not 100% sure what analytical approaches are out there, but my first step is to simply visualize the data. What does it look like?

So I’ve started making animations within the statistical programming software R. Here’s one of my first ones (stay tuned over the holidays for more). Each frame represents a different hour on the 24 hour clock: 0 is midnight, 12 is noon, 23 is 11pm, etc. Each dot is sized proportionally to the number of captures of that species at that site at that time of day. The dots are set to be a little transparent so you can see when sites are hotspots for multiple species. [*note: if the .gif isn’t animating for you in the blog, try clicking on it so it opens in a new tab.]

It’s pretty clear that there are a handful of “naptime hotspots” on the plains. You can bet your boots that those are nice shady trees in the middle of nowhere that the lions really love.
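The data behind each frame of an animation like this can be sketched in a few lines. This version is in Python rather than R. The site codes, timestamps, and the `hourly_site_counts` and `frame` helpers are made up for illustration; the real animation code may look quite different.

```python
from collections import Counter
from datetime import datetime

def hourly_site_counts(records):
    """Count captures per (hour of day, site, species).

    records: iterable of (timestamp string, site, species).
    """
    counts = Counter()
    for stamp, site, species in records:
        hour = datetime.strptime(stamp, "%Y-%m-%d %H:%M:%S").hour
        counts[(hour, site, species)] += 1
    return counts

def frame(counts, hour, max_size=300.0):
    """Return {(site, species): dot size} for one hour of the clock.

    Sizes are proportional to the capture count and scaled against the
    largest count in the whole data set, so frames are comparable.
    """
    if not counts:
        return {}
    biggest = max(counts.values())
    return {
        (site, species): max_size * n / biggest
        for (h, site, species), n in counts.items()
        if h == hour
    }

# Hypothetical records for illustration only.
records = [
    ("2012-07-01 11:05:00", "B04", "lion"),
    ("2012-07-01 11:40:00", "B04", "lion"),
    ("2012-07-02 11:15:00", "C07", "hyena"),
    ("2012-07-02 23:30:00", "C07", "hyena"),
]

counts = hourly_site_counts(records)
for hour in range(24):
    dots = frame(counts, hour)
    if dots:
        print(hour, dots)
```

Each of the 24 dictionaries would become one frame: plot a semi-transparent dot at each site's coordinates, sized by the value, and stitch the frames together.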

Space and time

If you are a nerd like me, the sheer magnitude of questions that can be addressed with Snapshot Serengeti data is pretty much the coolest thing in the world. Though, admittedly, the jucy lucy is a close second.

The problem with these really cool questions, however, is that they take some rather complicated analyses to answer. And there are a lot of steps along the way. For example, ultimately we hope to understand things like how predator species coexist, how the migration affects resident herbivores, and how complex patterns of predator territoriality coupled with migratory and resident prey drive the stability of the ecosystem… But we first have to be able to turn these snapshots into real information about where different animals are and when they’re there.

That might sound easy. You guys have already done the work of telling us which species are in each picture – and, as Margaret’s data validation analysis shows, you guys are really good at that. So, since we have date, time, and GPS information for each picture, it should be pretty easy to use that, right?

Sort of. On one hand, it’s really easy to create preliminary maps from the raw data. For example, this map shows all the sightings of lions, hyenas, leopards, and cheetahs in the wet and dry seasons. Larger circles mean that more animals were seen there; blank spaces mean that none were.

And it’s pretty easy to map when we’re seeing animals. This graph shows the number of sightings for each hour of the day. On the X-axis, 0 is midnight, 12 is noon, 23 is 11pm.

So we’ve got a good start. But then the question becomes “How well do the cameras reflect actual activity patterns?” And, more importantly, “How do we interpret the camera trap data to understand actual activity patterns?”

For example, take the activity chart above. Let’s look at lions. We know from years and years of watching lions, day and night, that they are a lot more active at night. They hunt, they fight, they play much more at night than during the day. But when we look at this graph, we see a huge number of lion photos taken between 10:00 and 12:00. If we didn’t know anything about lions, we might think that lions were really active during that time, when in reality, they’ve simply moved 15 meters over to the nearest tree for shade, and then stayed there. Because we have outside understanding of how these animals move, we’re able to identify sources of bias in the camera trapping data, and account for them so we can get to the answers we’re really looking for.
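One rough way to spot this kind of bias is to count, for each hour, both the number of photos and the number of different sites they came from. The Python sketch below uses made-up site codes and counts, and `hourly_summary` is a hypothetical helper. It is a simple diagnostic for illustration, not the correction method used in the actual analysis.

```python
from collections import defaultdict

def hourly_summary(captures):
    """Summarize captures of one species by hour of day.

    captures: iterable of (hour, site).
    Returns {hour: (number of photos, number of distinct sites)}.
    """
    photos = defaultdict(int)
    sites = defaultdict(set)
    for hour, site in captures:
        photos[hour] += 1
        sites[hour].add(site)
    return {hour: (photos[hour], len(sites[hour])) for hour in sorted(photos)}

# Hypothetical lion captures for illustration only:
# six midday photos at one shady site, four night photos at four sites.
lion_captures = [(11, "B04")] * 6 + [
    (22, "B04"),
    (22, "C07"),
    (22, "D10"),
    (22, "E02"),
]

summary = hourly_summary(lion_captures)
for hour, (n_photos, n_sites) in summary.items():
    print(hour, n_photos, n_sites)
```

Lots of photos from very few sites, as in the midday hour here, hints at animals parked in front of a camera rather than animals on the move.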

So far, shade seems to be our biggest obstacle in reconciling how the cameras see the world vs. what is actually going on. I’ve just shown you a bit about how shade affects camera data on when animals are active – next week I’ll talk more about how it affects camera data on where animals are.

Hard to find a better place to nap…