Algorithm vs. Experts

Recently, I’ve been analyzing how good our simple algorithm is for turning volunteer classifications into authoritative species identifications. I’ve written about this algorithm before. Basically, it counts up how many “votes” each species got for every capture event (set of images). Then, species that get more than 50% of the votes are considered the “right” species.
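For readers who think in code, a minimal Python sketch of that vote-counting rule might look like this. The function name `majority_answer` and the choice to store each vote as a `frozenset` of species names are assumptions made for illustration, not the project's actual code, and the votes are made up.

```python
from collections import Counter


def majority_answer(votes):
    """Return the answer holding more than 50% of the votes, or None.

    Each vote is a frozenset of species names (a hypothetical
    representation), so a two-species capture counts as one combined answer.
    """
    if not votes:
        return None
    answer, count = Counter(votes).most_common(1)[0]
    # Strictly more than half: an exact 50/50 split yields no answer.
    return answer if count > len(votes) / 2 else None


# Made-up votes for one capture: 6 zebra, 3 zebra+wildebeest, 1 wildebeest.
votes = (
    [frozenset({"zebra"})] * 6
    + [frozenset({"zebra", "wildebeest"})] * 3
    + [frozenset({"wildebeest"})]
)
result = majority_answer(votes)  # zebra holds 6 of the 10 votes
```

Here zebra alone takes 60% of the vote, so it wins. If the votes had been split five and five, the function would return `None`, meaning no answer.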

To test how well this algorithm fares against expert classifiers (i.e. people who we know to be very good at correctly identifying animals), I asked a handful of volunteers to classify several thousand randomly selected captures from Season 4. I stopped everyone as soon as I knew 4,000 captures had been looked at, and we ended up with 4,149 captures. I asked the experts to note any captures that they thought were particularly tricky, and I sent these on to Ali for a final classification.

Then I ran the simple algorithm on those same 4,149 captures and compared the experts’ species identifications with the algorithm’s identifications. Here’s what I found:

For a whopping 95.8% of the captures, the simple algorithm (due to the great classifying of all the volunteers!) agrees with the experts. But, I wondered, what’s going on with that other 4.2%? So I had a look:

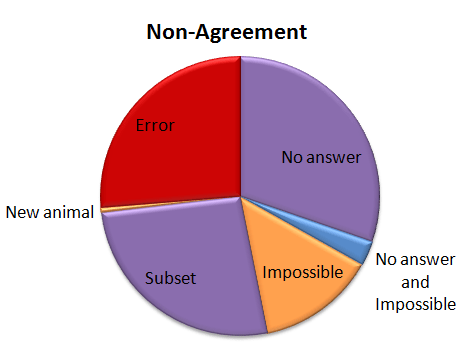

Of the captures that didn’t agree, about 30% were cases where the algorithm came up with no answer but the experts did. This is “No answer” in the pie chart. The algorithm fails to come up with an answer when the classifications vary so much that there is no single species (or combination if there are multiple species in a capture) that takes more than 50% of the vote. These are probably rather difficult images, though I haven’t looked at them yet.

Another small group, about 15% of the captures that didn’t agree, was marked as “impossible” by the experts. (This was just 24 captures out of the 4,149.) Five captures were both marked as “impossible” by the experts and left without an answer by the algorithm, so in some strange way, we might consider these five captures to be in agreement.

Just over a quarter of the captures didn’t agree because either the experts or the algorithm saw an extra species in a capture. This is labeled as “Subset” in the pie chart. Most of the extra animals were Other Birds or zebras in primarily wildebeest captures or wildebeest in primarily zebra captures. The extra species really is there; it was just missed by the other party. For most of these, it’s the experts who see the extra species.

Then we have our awesome, but difficulty-causing duiker. There was no way for the algorithm to match the experts because we didn’t have “duiker” on the list of animals that volunteers could choose from. I’ve labeled this duiker as “New animal” on the pie chart.

Then the rest of the captures — just over a quarter of them — were what I’d call real errors. Grant’s gazelles mistaken for Tommies. Buffalo mistaken for wildebeest. Aardwolves mistaken for striped hyenas. That sort of thing. They account for just 1.1% of all the 4,149 captures.
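The categories above can be sketched as a small Python function. Everything named here (`disagreement_category`, the `IMPOSSIBLE` marker, the `listed_species` set) is hypothetical, and the order of the checks is an assumption: testing “impossible” first means the five captures that were both impossible and unanswered land in the “impossible” bin, which may not be how the pie chart was actually built.

```python
IMPOSSIBLE = "impossible"  # hypothetical marker for an expert's "impossible" call


def disagreement_category(expert, algorithm, listed_species):
    """Bin one capture by how the expert and algorithm answers relate.

    expert:    frozenset of species names, or IMPOSSIBLE
    algorithm: frozenset of species names, or None for "no answer"
    """
    if expert == IMPOSSIBLE:
        return "impossible"
    if expert == algorithm:
        return "agree"
    if algorithm is None:
        return "no answer"
    if not expert <= listed_species:
        # The expert named a species volunteers could not choose.
        return "new animal"
    if expert < algorithm or algorithm < expert:
        # One side saw everything the other saw, plus an extra species.
        return "subset"
    return "real error"


# A tiny made-up species list and three made-up captures.
listed = {"zebra", "wildebeest", "reedbuck", "gazelleGrants", "gazelleThomsons"}
examples = [
    disagreement_category(frozenset({"zebra", "wildebeest"}), frozenset({"wildebeest"}), listed),
    disagreement_category(frozenset({"duiker"}), frozenset({"reedbuck"}), listed),
    disagreement_category(frozenset({"gazelleGrants"}), frozenset({"gazelleThomsons"}), listed),
]
```

The three examples come out as “subset”, “new animal”, and “real error”, in that order.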

I’ve given the above Non-agreement pie chart some hideous colors. The regions in purple are what scientists call Type II errors, or “false negatives.” That is, the algorithm is failing to identify a species that we know is there — either because it comes up with no answer, or because it misses extra species in a capture. I’m not too terribly worried about these Type II errors. The “Subset” ones happen mainly with very common animals (like zebra or wildebeest) or animals that we’re not directly studying (like Other Birds), so they won’t affect our analyses. The “No answers” may mean we miss some rare species, but if we’re analyzing common species, it won’t be a problem to be missing a small fraction of them.

The regions in orange are a little more concerning; these are the Type I errors, or “false positives.” These are images that should be discarded from analysis because there is no useful information in them for the research we want to do. But our algorithm identifies a species in the images anyway. These may be some of the hardest captures to deal with as we work on our algorithm.

And the red-colored errors are obviously a concern, too. The next step is to incorporate some smarts into our simple algorithm. Information about camera location, time of day, and the species identified in captures immediately before or after a given capture can help us try to make that 4.2% non-agreement even smaller.

Not on the A-List

I’m working on an analysis that compares the classifications of volunteers at Snapshot Serengeti with the classifications of experts for several thousand images from Season 4. This analysis will do two things. First, it will give us an idea of how good (or bad) our simple vote-counting method is for figuring out species in pictures. Second, it will allow us to see if more complicated systems for combining the volunteer data work any better. (Hopefully I’ll have something interesting to say about it next week.)

Right now I’m curating the expert classifications. I’ve allowed the experts to classify an image as “impossible,” which, I know, is totally unfair, since Snapshot Serengeti volunteers don’t get that option. But we all recognize that for some images, it really isn’t possible to figure out what the species is — either because it’s too close or too far or too off the side of the image or too blurry or …. The goal is that whatever our combining method is, it should be able to figure out “impossible” images by combining the non-“impossible” classifications of volunteers. We’ll see if we can do it.

Another challenge that I’m just running into is that our data set of several thousand images contains a duiker. A what? A common duiker, also known as a bush duiker:

You’ve probably noticed that “duiker” is not on the list of animals we provide. While the common duiker is widespread, it’s not commonly seen in the Serengeti, being small and active mainly at night. So we forgot to include it on the list. (Sorry about that.)

The result is that it’s technically impossible for volunteers to properly classify this image. Which means that it’s unlikely that we’ll be able to come up with the correct species identification when we combine volunteer classifications. (Interested in what the votes were for this image? 10 reedbuck, 6 dik dik, and 1 each of bushbuck, wildebeest(!), and impala.)
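To see what a simple majority rule would make of those votes, here is a quick Python tally. It assumes each of the 19 classifications named exactly one species, which the vote list suggests but doesn't guarantee, and the variable names are just for illustration.

```python
from collections import Counter

# Votes for the duiker image, as listed above.
duiker_votes = Counter(
    {"reedbuck": 10, "dik dik": 6, "bushbuck": 1, "wildebeest": 1, "impala": 1}
)

total = sum(duiker_votes.values())
top_species, top_count = duiker_votes.most_common(1)[0]
share = top_count / total

# More than 50% of the vote is needed to produce an answer.
winner = top_species if share > 0.5 else None

print(f"{top_species}: {top_count}/{total} = {share:.1%}, answer: {winner}")
```

Under that assumption, reedbuck takes 10 of 19 votes, just over half, so a more-than-50% rule would confidently report a reedbuck where the experts see a duiker.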

The duiker is not the only animal that’s popped up unexpectedly since we put together the animal list and launched the site. I never expected we’d catch a bat on film:

Our friends over at Bat Detective tell us that the glare on the face makes it impossible to truly identify, but they did confirm that it’s a large, insect-eating bat. Anyway, how to classify it? It’s not a bird. It’s not a rodent. And we didn’t allow for an “other” category.

I also didn’t think we’d see insects or spiders.

Moths fly by, ticks appear on mammal bodies, spiders spin webs in front of the camera and even ants have been seen walking on nearby branches. Again, how should they be classified?

And here’s one more uncommon antelope that we’ve seen:

It’s a steenbok, again not commonly seen in Serengeti. And so we forgot to put it on the list. (Sorry.)

Luckily, all these animals we missed from the list are rare enough in our data that when we analyze thousands of images, the small error in species identification won’t matter much. But it’s good to know that these rarely seen animals are there. When Season 5 comes out (soon!), if you run into anything you think isn’t on our list, please comment in Talk with a hash-tag, so we can make a note of these rarities. Thanks!