Algorithm vs. Experts

Recently, I’ve been analyzing how good our simple algorithm is for turning volunteer classifications into authoritative species identifications. I’ve written about this algorithm before. Basically, it counts up how many “votes” each species got for every capture event (set of images). Then, species that get more than 50% of the votes are considered the “right” species.
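To make the 50% rule concrete, here is a minimal Python sketch. It is an illustration only, not the project's actual code: the function name `majority_species` and the data layout (one set of species per volunteer classification) are assumptions, and treating a multi-species capture as a single combined vote is one reading of how the counting works.

```python
from collections import Counter

def majority_species(votes):
    """Return the species combination with more than 50% of the votes, else None.

    `votes` is a list with one entry per volunteer classification of a capture;
    each entry is the set of species that volunteer reported (assumed layout).
    """
    if not votes:
        return None
    # Count identical species combinations; frozenset makes a set hashable.
    counts = Counter(frozenset(v) for v in votes)
    winner, n = counts.most_common(1)[0]
    # Strictly more than half of all classifications must agree.
    return set(winner) if 2 * n > len(votes) else None
```

For example, `majority_species([{"zebra"}, {"zebra"}, {"wildebeest"}])` gives `{"zebra"}`, while an even split between two species gives `None`, meaning the capture gets no answer.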

To test how well this algorithm fares against expert classifiers (i.e. people who we know to be very good at correctly identifying animals), I asked a handful of volunteers to classify several thousand randomly selected captures from Season 4. I stopped everyone as soon as I knew 4,000 captures had been looked at, and we ended up with 4,149 captures. I asked the experts to note any captures that they thought were particularly tricky, and I sent these on to Ali for a final classification.

Then I ran the simple algorithm on those same 4,149 captures and compared the experts’ species identifications with the algorithm’s identifications. Here’s what I found:

For a whopping 95.8% of the captures, the simple algorithm (due to the great classifying of all the volunteers!) agrees with the experts. But, I wondered, what’s going on with that other 4.2%? So I had a look:

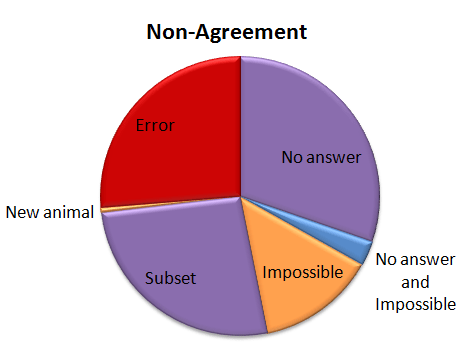

Of the captures that didn’t agree, about 30% were cases where the algorithm came up with no answer but the experts did. This is “No answer” in the pie chart. The algorithm fails to come up with an answer when the classifications vary so much that there is no single species (or combination, if there are multiple species in a capture) that takes more than 50% of the vote. These are probably rather difficult images, though I haven’t looked at them yet.

Another small group, about 15% of the captures that didn’t agree, was marked as “impossible” by the experts. (This was just 24 captures out of the 4,149.) Five captures were both marked as “impossible” by the experts and left without an answer by the algorithm, so in some strange way we might consider these five captures to be in agreement.

Just over a quarter of the captures didn’t agree because either the experts or the algorithm saw an extra species in a capture. This is labeled as “Subset” in the pie chart. Most of the extra animals were Other Birds, zebras in primarily wildebeest captures, or wildebeest in primarily zebra captures. The extra species really is there; it was just missed by the other party. For most of these, it’s the experts who see the extra species.

Then we have our awesome, but difficulty-causing duiker. There was no way for the algorithm to match the experts because we didn’t have “duiker” on the list of animals that volunteers could choose from. I’ve labeled this duiker as “New animal” on the pie chart.

Then the rest of the captures — just over a quarter of them — were what I’d call real errors. Grant’s gazelles mistaken for Tommies. Buffalo mistaken for wildebeest. Aardwolves mistaken for striped hyenas. That sort of thing. They account for just 1.1% of all the 4,149 captures.
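The categories in the pie chart can be sketched as a small decision function. This is a hypothetical reconstruction from the descriptions above, not the code actually used for the analysis: the name `categorize`, the `"impossible"` marker, and the order of the checks are all assumptions. In particular, it files a capture that is both “impossible” and unanswered under “Impossible”, even though those could arguably count as agreement.

```python
IMPOSSIBLE = "impossible"  # assumed marker for captures the experts could not identify

def categorize(expert, algorithm, volunteer_options):
    """Label one capture by comparing the expert and algorithm answers.

    expert: set of species, or IMPOSSIBLE
    algorithm: set of species, or None when no combination passed 50%
    volunteer_options: set of species volunteers were able to choose from
    """
    if expert == IMPOSSIBLE:
        return "Impossible"
    if algorithm is None:
        return "No answer"
    if expert == algorithm:
        return "Agree"
    if not expert <= volunteer_options:
        # The experts named a species that was not on the volunteers' list.
        return "New animal"
    if expert < algorithm or algorithm < expert:
        # One party saw every species the other saw, plus at least one more.
        return "Subset"
    return "Error"
```

With this ordering, an expert answer of `{"zebra", "wildebeest"}` against an algorithm answer of `{"wildebeest"}` is a “Subset”, while a Grant’s gazelle identified as a Tommy falls through to “Error”.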

I’ve given the above Non-agreement pie chart some hideous colors. The regions in purple are what scientists call Type II errors, or “false negatives.” That is, the algorithm is failing to identify a species that we know is there — either because it comes up with no answer, or because it misses extra species in a capture. I’m not too terribly worried about these Type II errors. The “Subset” ones happen mainly with very common animals (like zebra or wildebeest) or animals that we’re not directly studying (like Other Birds), so they won’t affect our analyses. The “No answers” may mean we miss some rare species, but if we’re analyzing common species, it won’t be a problem to be missing a small fraction of them.

The regions in orange are a little more concerning; these are the Type I errors, or “false positives.” These are images that should be discarded from analysis because there is no useful information in them for the research we want to do. But our algorithm identifies a species in the images anyway. These may be some of the hardest captures to deal with as we work on our algorithm.

And the red-colored errors are obviously a concern, too. The next step is to incorporate some smarts into our simple algorithm. Information about camera location, time of day, and the species identified in captures immediately before or after a given capture can help us shrink that 4.2% non-agreement even further.

Drumroll, please

If you’ve been following Margaret’s blogs, you’ve known this moment was coming. So stop what you’re doing, put down your pens and pencils, and open up your internet browsers, folks, because Season 5 is here!

It’s been an admittedly long wait. Season 5 represents photos from June – December 2012. During those six months I was back here in Minnesota, working with Margaret and the amazing team at Zooniverse to launch Snapshot Serengeti; meanwhile, in Serengeti, Stanslaus Mwampeta was working hard to keep the camera trap survey going. I mailed the Season 5 photos back as soon as possible after returning to Serengeti – but the vagaries of cross-continental postal service were against us, and it took nearly 5 months to get these images from Serengeti to Minnesota, where they could be prepped for the Snapshot interface.

So now that you’ve finally kicked the habit, get ready to dive back in. As with Season 4, the photos in Season 5 have never been seen before. Your eyes are the first. And you might see some really exciting things.

For starters, you won’t see as many wildebeest. By now, they’ve moved back to the north – northern Serengeti as well as Kenya’s Maasai Mara – where more frequent rains keep the grass long and lush year-round. Here, June marks the onslaught of the dry season. From June through October, if not later, everything is covered in a relentless layer of dust. After three months without a drop of rain, we start to wonder if the water in our six 3,000-liter tanks will last us another two months. We ration laundry to one dusty load a week, and showers to every few field days. We’ve always made it through so far, but sometimes barely…and often rather smelly.

You might see Stan

Norbert

And occasionally Daniel

Or me

Checking the camera traps.

But most excitingly, you might see African wild dogs.

photo nabbed from: http://en.wikipedia.org/wiki/File:LycaonPictus.jpg

Also known as the Cape hunting dog or painted hunting dog, these canines disappeared from Serengeti in the early 1990s. While various factors may have contributed to their decline, wild dog populations have lurked just outside the Serengeti, in multi-use protected areas (e.g., with people, cows, and few lions) for at least 10 years. Many researchers suspect that wild dogs have failed to recolonize their previous home ranges inside the park because lion populations have nearly tripled – and as you saw in “Big, Mean, & Nasty”, lions do not make living easy for African wild dogs.

Nonetheless, the Tanzanian government has initiated a wild dog relocation program that hopes to bring wild dogs back to Serengeti, where they thrived several decades ago. In August 2012, and again in December, the Serengeti National Park authorities released a total of 29 wild dogs in the western corridor of the park. While the release area is well outside of the camera survey area, rumor has it that the dogs booked it across the park, through the camera survey, on their journey to the hills of Loliondo. Only a handful of people have seen these newly released dogs in person, but it’s possible they’ve been caught on camera. So keep your eyes peeled! And if you see something that might be a wild dog, please tag it with #wild-dog!! Happy hunting!

Living with lions

A few weeks ago, I wrote about how awful lions are to other large carnivores. Basically, lions harass, steal food from, and even kill hyenas, cheetahs, leopards, and wild dogs. Their aggression usually has no visible justification (e.g. they don’t eat the cheetahs they kill), but can have devastating effects. One of my main research goals is to understand how hyenas, leopards, cheetahs, and wild dogs survive with lions. As I mentioned the other week, I think the secret may lie in how these smaller carnivores use the landscape to avoid interacting with lions.

Top predators (the big ones doing the chasing and killing) can create what we call a “landscape of fear” that essentially reduces the amount of land available to smaller predators. Smaller predators are so afraid of encountering the big guys that they avoid using large chunks of the landscape altogether. One of my favorite illustrations of this pattern is the map below, which shows how swift foxes restrict their territories to the no-man’s land between coyote territories.

A map of coyote and swift fox territories in Texas. Foxes are so afraid of encountering coyotes that they restrict their territories into the spaces between coyote ranges.

The habitat inside the coyote territories is just as good, if not better, for the foxes, but the risk of encountering a coyote is too great. By restricting their habitat use to the areas outside coyote territories, swift foxes have essentially suffered from habitat loss, meaning that they have less land and fewer resources to support their population. There’s growing evidence that this effective habitat loss may be the mechanism driving suppression in smaller predators. In fact, this habitat loss may have larger consequences on a population than direct killing by the top predator!

While some animals are displaced from large areas, others may be able to avoid top predators at a much finer scale. They may still use the same general areas, but use scent or noise to avoid actually running into a lion (or coyote). This is called fine-scale avoidance, and I think animals that can achieve fine-scale avoidance, instead of suffering from large-scale displacement, manage to coexist.

The camera traps are, fingers crossed, going to help me understand at what scale hyenas, leopards, cheetahs, and wild dogs avoid lions. My general hypothesis is that if these species are generally displaced from lion territories, and suffer effective habitat loss, their populations should decline as lion populations grow. If instead they are able to use the land within lion territories, avoiding lions by shifting their patterns of habitat use or changing the time of day they are active, then I expect them to coexist with lions pretty well.

So what have we seen so far? Stay tuned – I’ll share some preliminary results next week!

#####

Map adapted from: Kamler, J.F., Ballard, W.B., Gilliland, R.L., and Mote, K. (2003b). Spatial relationships between swift foxes and coyotes in northwestern Texas. Canadian Journal of Zoology 81, 168–172.