Eat food. Don’t be food.

Imagine you are an impala.

You’re hungry. You want to go find some lovely nice grass to graze, and you know where the tastiest grass is. The only problem is that every time you go over to taste that best grass, you smell lion. And, well, that’s a little scary. So what do you do? Take the chance and go nibble the tastiest of tasty grasses? Or go elsewhere where the grass isn’t quite as nice?

This conflict for herbivores between finding the most nutritious food available and not becoming food is the basis for some of our research questions. We know that lions prioritize certain areas for hunting. In fact, former Lion Research Center researcher Anna Mosser discovered that lions set up their territories near where rivers and streams come together. Here there is open water where herbivores may come to drink and lots of green leaves and vegetation (which is good eating for herbivores, but also provides a place for lions to hide and stalk those herbivores).

We know what the lions do. But what we don’t really know is what sort of decisions the herbivores make. The answer to this question likely depends on the answers to some other questions. We might first ask: what does the distribution of grass look like out in the Serengeti? If it’s the wet season and there’s good grass all around, perhaps we’d expect that herbivores would tend to avoid places with lots of lions. But if it’s the dry season and the only good places to eat are near rivers, then maybe the herbivores are forced to eat near lions so they don’t starve.

Or, we might ask: for any given herbivore species, how likely is it to be attacked by lions? Very large herbivores – like hippos, elephants, and giraffes – are a lot less likely to be attacked by lions than their mid-sized relatives. So maybe these big herbivores don’t care very much about whether they’re eating near lions or not.

We also have to ask the question of whether the herbivores can even tell which areas have a lot of lions and which don’t. If they can’t tell where the lions are, then we’d expect them to spread out, with more herbivores in areas of better foliage and fewer animals where the foliage isn’t so good.

The data you’re giving us through Snapshot Serengeti will help us understand the choices herbivores are making. We’ll be able to map the distributions of lots of different herbivore species. Then we’ll compare the distributions with the areas with the best greenery and the areas where lions congregate. We’ll be able to see if different herbivore species distribute themselves in different ways. And we’ll be able to see, over time, how these herbivore distributions change with dry season, wet season, droughts, and floods.

We need an ‘I don’t know’ button!

Okay, okay. I hear you. I know it’s really frustrating when you get an image with a partial flank or a faraway beast or maybe just an ear tip. I recognize that you can’t tell for sure what that animal is. But part of why people are better at this sort of identification process than computers is that you can make use of partial information to narrow down your guess. That partial flank has short brown hair with no stripes or bars. And it’s tall enough that you can rule out all the short critters. Well, now you’ve really narrowed it down quite a lot. Can you be sure it’s a wildebeest and not a buffalo? No. But by taking a good guess, you’ve provided us with real, solid information.

We show each image to multiple people. Based on how much the first several people agree, we may show the image to many more people. And when we take everyone’s identifications into account, we get the right answer. Let me show you some examples to make this clearer. Here’s an easy one:

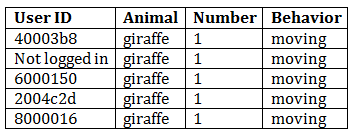

And if we look at how this got classified, we’re not surprised:

I don’t even have to look at the picture. If you hid it from me and only gave me the data, I would tell you that I am 100% certain that there is one moving giraffe in that image.

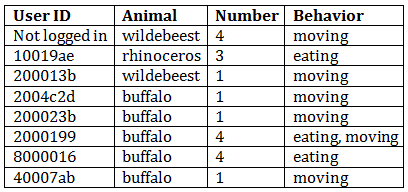

Okay, let’s take a harder image and its classifications:

This image is, in fact, of buffalo – at least the one in the foreground is, and it’s reasonable to assume the others are, too. Our algorithm would also conclude from the data table that this image is almost certainly of buffalo – 63% of classifiers agreed on that, and the other three classifications are ones that are easily confused with buffalo. We can also figure out from the data you’ve provided us that the buffalo are likely eating and moving, and that there is one obvious buffalo and another two or three that are harder to make out.
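To make the idea of agreement a little more concrete, here is a minimal Python sketch of one way to combine votes: a plain plurality vote, plus a rule for deciding whether an image should be shown to more people. This is not the project’s actual algorithm; the function names, the thresholds (`min_votes`, `agree_threshold`, `max_votes`), and the vote lists are all hypothetical, chosen only for illustration.

```python
from collections import Counter

def plurality_consensus(votes):
    """Return the most common label and the fraction of votes that chose it."""
    counts = Counter(votes)
    label, n = counts.most_common(1)[0]
    return label, n / len(votes)

def needs_more_votes(votes, min_votes=5, agree_threshold=0.8, max_votes=25):
    """Hypothetical retirement rule: keep showing an image until enough
    people agree, or until it has already been shown max_votes times."""
    if len(votes) < min_votes:
        return True          # too few opinions so far
    if len(votes) >= max_votes:
        return False         # shown enough times; stop regardless
    _, agreement = plurality_consensus(votes)
    return agreement < agree_threshold

# Hypothetical vote lists, not real Snapshot Serengeti data.
easy = ["giraffe"] * 5
hard = ["buffalo"] * 5 + ["wildebeest", "eland", "hartebeest"]

print(plurality_consensus(easy))   # ('giraffe', 1.0)
print(plurality_consensus(hard))   # ('buffalo', 0.625)
print(needs_more_votes(easy))      # False
print(needs_more_votes(hard))      # True
```

Under these made-up settings, the unanimous giraffe image would be retired right away, while the mixed image would go out to more volunteers, which is the behavior the paragraph above describes for easy versus hard images.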

My point in showing you this example is that even with fairly difficult images, you (as a group) get it right! If you (personally) mess up an image here or there, it’s no big deal. If you’re having trouble deciding between two animals, pick one – you’ll probably be right.

Now what if we had allowed people to have an ‘I don’t know’ button for this last image? I bet that half of them would have pressed ‘I don’t know.’ We’d be left with just 4 identifications and would need to send out this hard image to even more people. Then half of those people would click ‘I don’t know’ and we’d have to send it out to more people. You see where I’m going with this? An ‘I don’t know’ button would guarantee that you would get many, many more annoying, frustrating, and difficult images because other people would have clicked ‘I don’t know.’ When we don’t have an ‘I don’t know’ button, you give us some information about the image, and that information allows us to figure out each image faster – even the difficult ones.
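The arithmetic behind this can be sketched in a few lines of Python. Assume, hypothetically, that each viewer independently clicks ‘I don’t know’ with probability `p_idk`. Then each showing yields a real identification with probability `1 - p_idk`, so collecting `target_ids` identifications takes about `target_ids / (1 - p_idk)` showings on average, which is what you get by adding up the rounds of sending the image out again. The function name and parameters are illustrative only.

```python
import math

def showings_needed(target_ids, p_idk):
    """Approximate number of showings needed to collect target_ids real
    identifications if each viewer independently skips with probability
    p_idk. Rounded up to a whole number of showings."""
    if not 0 <= p_idk < 1:
        raise ValueError("p_idk must be in [0, 1)")
    return math.ceil(target_ids / (1 - p_idk))

print(showings_needed(8, 0.0))   # 8: nobody skips
print(showings_needed(8, 0.5))   # 16: half skip, so twice the showings
```

With half of viewers passing, the expected number of showings for a hard image doubles, and every extra showing is another tough image landing in someone’s queue.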

“Fine, fine,” you might be saying. “But seriously, some of those images are impossible. Don’t you want to know that?”

Well, yes, we do want to know that. But it turns out that when you guess an animal and press “identify” on an impossible image, you do tell us that. Or, rather, as a group, you do. Let’s look at one:

Now I freely admit that it is impossible to accurately identify this animal. What do you guys say? Well…

Right. So there is one animal moving. And the guesses as to what that animal is are all over the place. So we don’t know. But wait! We do know a little; all those guesses are of small animals, so we can conclude that there is one small animal moving. Is that useful to our research? Maybe. If we’re looking at hyena and leopard ranging patterns, for example, we know whatever animal got caught in this image is not one we have to worry about.
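One way to express “we do know a little” is to re-tally the votes at a coarser level. The sketch below assumes a hypothetical lookup table that maps species to size classes; neither the table, the vote list, nor the function is part of Snapshot Serengeti. Five scattered species guesses give only 20% agreement at the species level, but 100% agreement that the animal is small.

```python
from collections import Counter

# Hypothetical lookup table for illustration only.
SIZE_CLASS = {
    "hare": "small", "mongoose": "small", "genet": "small",
    "porcupine": "small", "jackal": "small",
    "zebra": "large", "wildebeest": "large", "buffalo": "large",
}

def consensus_at_level(votes, mapping):
    """Map each species vote to a coarser group, then return the most
    common group and the fraction of votes that fall into it."""
    groups = Counter(mapping.get(v, "unknown") for v in votes)
    group, n = groups.most_common(1)[0]
    return group, n / len(votes)

votes = ["hare", "mongoose", "genet", "porcupine", "jackal"]
species_share = Counter(votes).most_common(1)[0][1] / len(votes)

print(species_share)                          # 0.2
print(consensus_at_level(votes, SIZE_CLASS))  # ('small', 1.0)
```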

So, yes, I know you’d love to have an ‘I don’t know’ button. I, myself, have volunteered on other Zooniverse projects and have wished to be able to say that I really can’t tell what kind of galaxy that is or what type of cyclone I’m looking at. But in the end, not having that button there means that you get fewer of the annoying, difficult images, and also that we get the right answers, even for impossible images.

So go ahead. Make a guess on that tough one. We’ll thank you.

Welcome to Snapshot Serengeti

Hi! And welcome to Snapshot Serengeti. We are all incredibly excited to be working with you to turn photographs into scientific discoveries. You might be wondering what this is all about, so let me start with some introductions. This is Ali:

Ali is a researcher at the University of Minnesota. She studies the big carnivores (lions, hyena, cheetahs, and leopards) in the Serengeti. Every year she flies to Tanzania, loads up on supplies in Arusha, and then drives for a day – mostly on dirt roads – out into Serengeti National Park.

Craig is a professor at the University of Minnesota and Ali’s advisor. He runs the Lion Research Center and has been studying lions out in the Serengeti for decades. He has radio collars on lions in many prides, which allows him to keep track of lots of individual lions over many years.

This is Daniel:

And this is Stan:

They are field assistants who work for Craig out in the Serengeti. Daniel is responsible for driving around and finding lions, while taking pictures of them and recording lots of information about what he sees. Stan is responsible for going out to the camera traps, making sure they’re still working fine, and changing the cameras’ memory cards when they fill up. Daniel and Stan live in the Serengeti year-round at Lion House, where facilities are basic, but the scenery is amazing.

When Ali goes out to the Serengeti, she stays at Lion House, too. Once she’s there, she makes observations that help her understand the big carnivores. A couple of years ago, she installed a bunch of camera traps so she could see where the carnivores roamed when she wasn’t present. The cameras worked really well and the images were so useful that she installed some more. Now there are 225 of these cameras automatically taking pictures out in the Serengeti!

My name is Margaret. This is me:

Like Ali, I’m a researcher at the University of Minnesota, and Craig is my advisor, too. Ali became inundated with the images the camera traps produced – a million per year! I have a reputation around here as a computer fundi – a Swahili word that translates as ‘master’ or ‘expert’ – and Ali asked me if there was a way to automate the process of turning images into data. See, the images by themselves aren’t that useful for research; Ali needs to know what species are in the pictures so she can do her analyses. For example, if she knows which images contain wildebeest and zebra, she can use that data to put together a map that shows their density across the landscape. (The size of the circles shows how many wildebeest and zebra there are in various places; bigger circles mean more wildebeest and zebra.)
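A minimal sketch of the step from classified images to that kind of map: sum the animal counts for the chosen species at each camera site, and each site’s total could then set the size of its circle. The record format, the site codes, and the function name are all hypothetical, made up for illustration.

```python
from collections import defaultdict

# Hypothetical classified records: (camera_site, species, count).
records = [
    ("B04", "wildebeest", 12),
    ("B04", "zebra", 3),
    ("C07", "wildebeest", 40),
    ("C07", "lion", 2),
    ("D10", "zebra", 5),
]

def totals_by_site(records, species):
    """Sum animal counts per camera site, keeping only the chosen species."""
    totals = defaultdict(int)
    for site, sp, count in records:
        if sp in species:
            totals[site] += count
    return dict(totals)

print(totals_by_site(records, {"wildebeest", "zebra"}))
# {'B04': 15, 'C07': 40, 'D10': 5}
```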

Unfortunately, I had to tell her that computers aren’t that good yet. They can’t yet reliably pick out objects from a picture, except under very controlled situations. But human eyes are remarkable in their ability to find objects in images. As I started looking through Ali’s images, I was blown away by how beautiful many of them are. And I wondered if we could ask for help from people. Lots of people. Hundreds. Thousands. So we started to think about how to do that.

The end result is Snapshot Serengeti, a collaboration with Zooniverse. We’d like to ask you to help us turn all these pictures from the Serengeti into scientific data by identifying what animals are in the images and what they’re doing. And in this blog, we will keep you updated on how the project is progressing, share cool information about the Serengeti and African wildlife, and hopefully answer a lot of questions you may have about animal behavior, ecology, and science in general.

So, check out the camera trap images. Tell us what you see in them. And let us know if you have questions. Thanks! You can get started by clicking here.